[Template] Operator's AI Validation Playbooks

Practical suggestions on how to implement human validation systems in your organization

Building on the Foundation

In our last article, we explored why humans must serve as the final verification layer, since meta-prompts alone can’t eliminate AI hallucinations. Operations professionals are well positioned to build the systematic infrastructure that makes validation routine and reliable across their organizations.

Common Validation Pitfalls

Before diving into playbooks, recognize these four critical traps that undermine even well-intentioned validation efforts:

The Confirmation Bias Trap

Problem: When AI generates content that supports your viewpoint, you’re more likely to accept it without verification.

Solution: Always verify supporting evidence as rigorously as contradictory evidence. Assign someone to play “devil’s advocate” and challenge AI-generated claims. Create a policy requiring extra scrutiny for information that aligns perfectly with your position.

The Authority Halo Effect

Problem: Well-formatted citations and professional-sounding sources can create a false sense of credibility.

Solution: Judge sources by their actual authority, not their appearance. A perfectly formatted fake citation is still fake. Train teams to distinguish authoritative sources from content that merely looks sophisticated but is unreliable. Always check each claim against the original source.

The Time Pressure Excuse

Problem: Deadlines don’t justify skipping verification, but they often lead to shortcuts.

Solution: Build validation time into project timelines. It’s better to deliver late than to deliver false information. Create “validation buffers” in project schedules and establish emergency protocols for rush situations.

The “AI Said So” Fallacy

Problem: Treating AI as an authoritative source rather than a research assistant. Avoiding this trap is even harder now that AI-generated answers often appear at the top of search results by default.

Solution: Never cite AI as a source. Always trace claims back to their original sources. Use credible and respected sources, especially for research. Establish clear policies that AI-generated content must be independently verified before publication or submission.

The Operator’s Role in Developing AI Validation

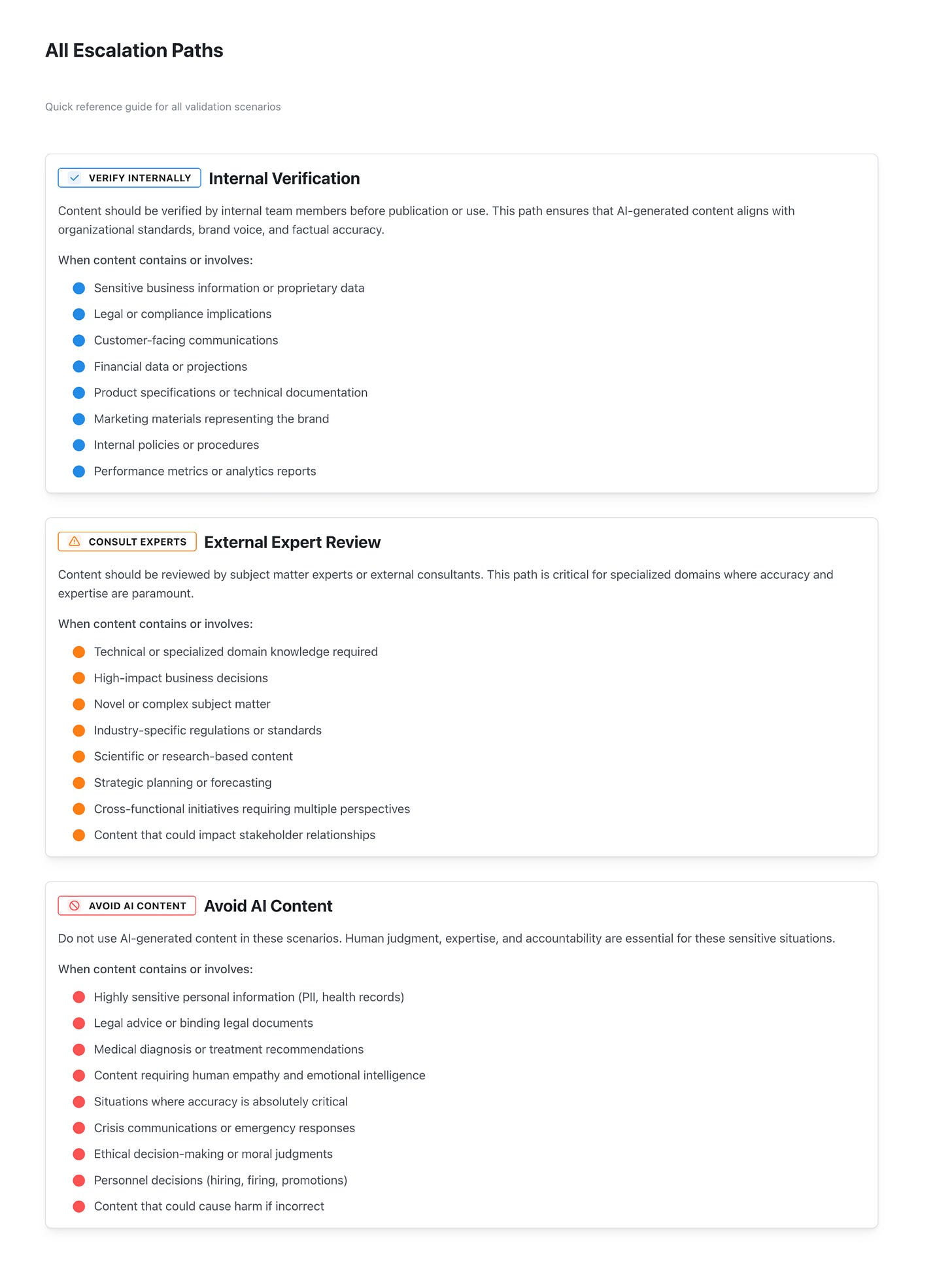

Operators can lead by creating clear policies, review processes, training, monitoring protocols, and defined escalation paths. Here is an example I am using with the playbook builder:

Continue reading to explore detailed options for operators, and use the app to build a validation plan tailored to your organization.