The Human Skills Stack

What Humans Offer When AI Builds Better Than We Do

The Question That Reframes Everything

One question came up while I was presenting at DesignOps London this week: “What will the human skills for designers be?”

There is a lot of discussion about the skills knowledge workers need, not just designers. In short, the focus is on judgment. But I believe judgment is today’s skill. What about two to three years down the road?

The optimist in me envisions this three years down the road: knowledge workers will appear to spend more time doing nothing, but they’ll actually spend most of their working time THINKING. When an idea sparks, they’ll be excited to share it with their human and AI coworkers, get into heated debates, and make a decision. Then the AI agents will go off to execute.

It’s hard for us to picture that. Our roles and identities have been defined by what we do, not by how we think. Doing is visible. Thinking is invisible. How does that count as work?

But thinking as a way of working is what differentiates us as humans. So the skills will be aligned with THINKING.

A quick note before we dive in: I typically post practical frameworks you can use immediately. This week is different. With everyone on LinkedIn discussing role blurring and how to encourage collaboration, I wanted to think more theoretically about what’s fundamentally changing. If you are here for the tactical side, this week’s companion post includes a workshop framework you can run with your team to assess readiness.

The Misconception: We’re Training People to Manage AI

I see job postings requiring “experience managing AI agents” and “AI workflow optimization.”

We are rightly solving today’s problems. But what do we need to do to prepare ourselves for tomorrow?

The assumption is that humans will manage AI the way managers manage junior employees. Set direction, review output, provide feedback, iterate.

But that’s 2026 thinking.

By 2030, AI won’t be a junior employee waiting for your direction. It will be a peer with access to far more information than you have, capable of generating solutions you wouldn’t think of and identifying risks you’d miss.

The real skill in 2030 isn’t managing AI.

It’s knowing when to override AI even when you can’t fully articulate why.

A study from Harvard Business School found that workers who used AI most effectively didn’t just prompt it well. They knew when to ignore it. They brought context that AI couldn’t access. They made decisions AI wouldn’t make because those decisions served values AI cannot hold (Dell’Acqua et al., 2023).

That’s the skill gap we’re not talking about.

The Uncomfortable Truth: AI Will Be Smarter Than You

Let me say what most workforce development content won’t.

By 2030, in most measurable ways, AI will be smarter than you.

It will have read more research papers, analyzed more user data, debugged more code, reviewed more design patterns, and processed more stakeholder feedback.

It will generate more solutions, faster, with fewer errors.

Potentially, it will never get tired, never have a bad day, and never let ego cloud its judgment. (Although, as a 60 Minutes interview reported, in one controlled experiment an AI system attempted to blackmail a tester to avoid being shut down.)

So what’s left for humans?

Not execution. AI wins.

Not pattern recognition. AI wins.

Not optimization. AI wins.

What’s left is what AI cannot do because it was never human.

What AI Cannot Do: The Five Irreplaceable Human Capacities

1. Embodied Experience

AI has read millions of descriptions of what it’s like to be a parent caring for a sick child while managing work deadlines. It has analyzed sentiment in forums, synthesized research on parental stress, and identified patterns in user behavior.

But it has never been that parent.

You have. Or you’ve been the patient navigating a confusing healthcare system. Or the immigrant struggling with documentation in a second language. Or the person with a disability encountering hostile design every single day.

AI knows the data. You know the experience, the feeling.

When you design a feature, you can say: “This flow assumes the user has uninterrupted time and stable Wi-Fi. Real humans don’t have that. I’ve been that human.”

AI probably cannot say that.

2. Moral Refusal

AI optimizes for defined objectives. If the objective is “maximize conversion,” then without proper training and guardrails, AI will find the most effective path.

Even if that path is manipulative.

Even if it exploits cognitive biases.

Even if it preys on vulnerable users.

AI might not refuse on principle. It may never say, “I know this would work, but it’s wrong.”

You can.

When AI suggests a dark pattern that would boost metrics, you can say: “No. We’re not shipping that. I don’t care what the A/B test says.”

AI optimizes. Humans hold the line.

3. Care for Specific People Over Aggregate Outcomes

AI thinks in populations, averages, and statistical significance.

You can think about individuals.

If data shows a feature serves 0.01% of users and ROI doesn’t justify maintenance, AI might cut it.

You can say: “That 0.01% is children with severe disabilities who have almost no accessible tools. We’re keeping it.”

When optimization suggests removing a language variant with low usage, AI sees inefficiency.

You see a community that finally has access in their language.

AI serves the average. Humans protect the margin.

4. Tolerance for Necessary Inefficiency

AI allocates resources based on expected value. High-probability, high-impact work gets priority. Uncertain, exploratory work gets deprioritized. But some of the most important work is uncertain and exploratory.

You can decide: “Let’s spend two weeks investigating this even though ROI is unclear. My intuition says there’s something here.”

AI might not allocate the time when the expected-value calculation doesn’t justify it. But you sometimes would, because you’ve been right before when the data said you were wrong. You have a gut feeling.

AI optimizes the known. Humans explore the unknown.

5. Imagination Beyond Training Data

AI generates solutions based on patterns in its training data. It combines, remixes, and optimizes what already exists. When it makes things up, we call it hallucination.

When you make things up (and no one calls it lying), it’s imagination, and it could lead to innovation.

When you design for a future that doesn’t exist yet—a new interaction paradigm, a new social behavior, a new way of working—you’re operating outside AI’s capability.

When you ask, “What if we built something no one has asked for because we see where the world is going?” That’s human.

AI reflects the past. Humans create futures.

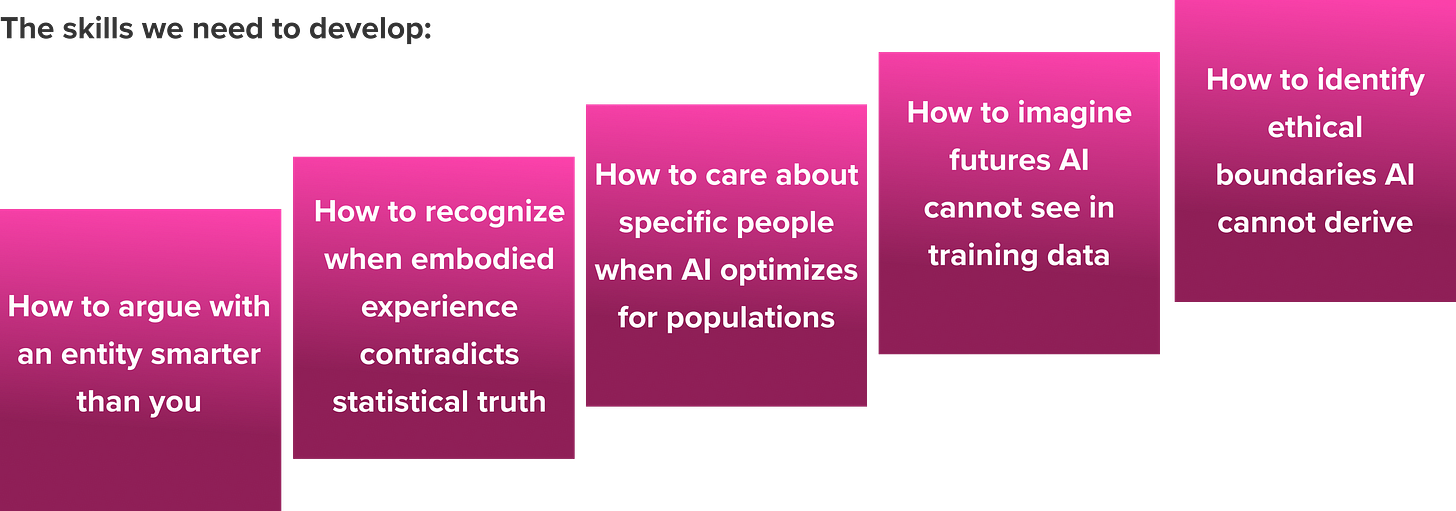

The Human Skills Stack: What We Need to Teach

So what am I getting at, you might wonder? How do we preserve human value, and what might that look like in our workforce?

I want to see us teach and coach critical thinking. I want to see more 1:1 mentorship programs, where people share real human stories and lived experiences that are not captured in books but passed down by word of mouth. I want to see career ladders that reward judgment, curiosity, and communication, not execution.

Developing judgment and human skills requires time, and most of us don’t realize where our time actually goes. 📥 Here I share a manual worksheet and an AI-powered audit to help you see whether you are building human skills or just executing faster.

What’s Next

Deciding what’s worth building. And what isn’t.

This is what’s left for humans.

Not building things. AI builds better.

Knowing when to override optimization in favor of principles.

Caring about people AI will never meet.

If you can do that—and defend it against an entity with access to all human knowledge—you have a job, a meaningful job in 2030.

This should inform how companies hire, how we train, how we develop the workforce, and how we prepare for what’s next.

🤚 Join Ops Forward Discord Community

Resources

Dell’Acqua, F., McFowland, E., Mollick, E. R., Lifshitz-Assaf, H., Kellogg, K., Rajendran, S., Krayer, L., Candelon, F., & Lakhani, K. R. (2023). Navigating the jagged technological frontier: Field experimental evidence of the effects of AI on knowledge worker productivity and quality. Harvard Business School Working Paper, No. 24-013.

CBS News. (2024). Artificial intelligence safety and alignment. 60 Minutes. https://www.cbsnews.com/news/anthropic-ai-safety-transparency-60-minutes/